Linking Claude Code to Local LLMs using LM Studio

Introduction #

In this blog post, I will be going through how to set up Claude Code CLI to use Local Large Language Models.

Why Local Large Language Models? #

Using local LLMs is not for everyone.

Here are some pros and cons to consider when deciding whether local LLMs suit your use case.

Advantages of using Local LLMs #

- Privacy and Data Security: Your information is not sent to any online cloud service provider.

- Cost Savings: Over the long term, running the LLM on your own machine saves you per-token API costs.

- Unlimited Usage: When hosting a local LLM, you do not have to worry about API rate limits and other API related issues.

- Customization: You can customize the local model to your needs instead of depending on what service providers provide out of the box.

- Offline Usage: Local models can be used in air-gapped environments where there is no internet connection.

- No Vendor Lock-in: You are not locked into any one type of LLM. Tools that run local models usually support a wide variety of models from different platforms.

Disadvantages of using Local LLMs #

- Large Upfront Cost: Powerful hardware is required to run local models, especially ones that compete with frontier models like ChatGPT and Claude Opus.

- Setup Complexity: The setup process is quite involved and requires some technical knowledge to deploy, maintain and update.

- Performance Trade-off: Local models may run slower and be less optimized than cloud models.

- Limited model selection: You are limited to models whose weights have been published.

- Scaling Limitations: If your usage increases beyond what your hardware can handle, scaling up is more complex and more expensive than with a cloud model.

Setting Up LM Studio #

Why LM Studio?

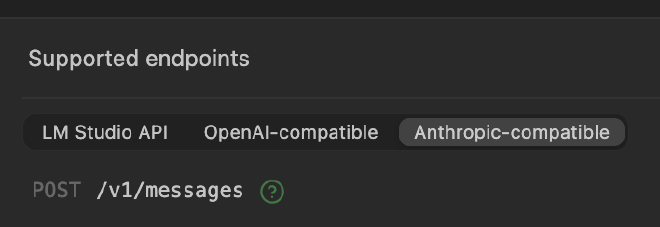

We use LM Studio here because it supports an Anthropic-compatible API, which makes our setup easier.

Step 1: Download & Install LM Studio #

Download and install LM Studio from the official website.

Go through the setup process and ensure that Developer mode is enabled.

This mode allows you to spin up a server on your local device that your other tools can connect to.

If you missed it during the initial setup, you can turn it on from the settings.

Click on the settings button.

Turn on developer mode.

Step 2: Choosing a Model #

To choose a model, click on the Model Search button.

Search for the model that you want and click on its download button to download it for later use.

Step 3: Turn on Local Server #

After you have downloaded the model in the previous step, we will need to start hosting the server in order to access the models.

Click on the Developer menu and click on the + Load Model button.

This should show a page where you can load models into memory.

Select the model that you want to load and wait for it to load into memory. After the loading is completed, you can move on to the next step.

Once the model has finished loading, it should show up as ready like the image above. After that, we can turn on the server using the switch above.

Take note of the IP address and the port for connecting to this server. You will need it for the next step when installing Claude Code.

After this step, the local LLM server is running. The next step is to link Claude Code to this LLM server.
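Before moving on, it can help to confirm that the server is really listening on the address and port you noted. The sketch below is one way to do that from Python. It assumes the server also exposes an OpenAI-style model listing at /v1/models that returns a JSON object with a "data" list; the function names are made up for this example, so adjust the path if your LM Studio version differs.

```python
import json
import urllib.request


def models_url(host: str = "127.0.0.1", port: int = 1234) -> str:
    """Build the model-listing URL (the /v1/models path is an assumption)."""
    return f"http://{host}:{port}/v1/models"


def parse_model_ids(body: str) -> list[str]:
    """Extract model ids from an OpenAI-style {"data": [{"id": ...}]} reply."""
    payload = json.loads(body)
    return [entry["id"] for entry in payload.get("data", [])]


def list_models(host: str = "127.0.0.1", port: int = 1234) -> list[str]:
    """Query the running server; raises URLError if nothing is listening."""
    with urllib.request.urlopen(models_url(host, port), timeout=5) as resp:
        return parse_model_ids(resp.read().decode("utf-8"))
```

Calling list_models() while the server is switched on should return the identifiers of the models LM Studio has available. A connection error usually means the server switch is off or the port is different.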

Extra Step (For those who want to access it from another computer) #

This extra step is for those who are hosting the LLM on a different computer from the one they are working on.

After turning on the server, click on the Server Settings button and adjust the settings here.

To serve it over the local network, turn on the Serve on Local Network toggle.

Also take note of the IP address that shows up. That is the IP address you should use for the configuration.
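As a small sanity check for the address you noted, the sketch below turns an IPv4 address and port into the base URL used later in the configuration, and rejects addresses that are not on a private network. The function name and the example address 192.168.1.50 are made up for illustration.

```python
import ipaddress


def lan_base_url(ip: str, port: int = 1234) -> str:
    """Return an http base URL for a server on the local network.

    Raises ValueError if `ip` is malformed or is a public address.
    """
    addr = ipaddress.IPv4Address(ip)  # raises a ValueError subclass on bad input
    if not addr.is_private:
        raise ValueError(f"{ip} is not a local network address")
    return f"http://{addr}:{port}"
```

For example, lan_base_url("192.168.1.50") gives http://192.168.1.50:1234, which is the value you would use in place of 127.0.0.1:1234 on the other computer.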

Setting Up Claude Code #

We have set up the Local LLM side of things from the section above. In this section, we will be setting up Claude Code to run with our Local LLM server.

Step 1: Download Claude Code #

Go to the quick start guide for Claude Code here.

Follow the install method for your device and download the CLI.

In this tutorial, I will be using Windows for the walkthrough. For other operating systems, the install process should be quite similar.

Copy the installation command for your platform and run it.

Step 2: Setup Environment Variables #

After installing Claude Code, do not launch it yet. Before we launch Claude Code, we have to point it to our local LLM server.

Claude Code reads 2 environment variables that let us do this.

REM Or the IP address

set ANTHROPIC_BASE_URL=http://127.0.0.1:1234

REM Can be anything if you are running locally.

REM Must be the API key you have set

REM if you are hosting on another computer.

set ANTHROPIC_API_KEY=lmstudio

We will need to set the 2 environment variables before we start up Claude Code. Copy the commands above if you are running on Windows.

For Linux / Mac users, you can add the export lines below to your ~/.bashrc or ~/.zshrc file to keep the settings permanently.

Otherwise, you can simply run these 2 commands before starting Claude Code.

# Or the IP address

export ANTHROPIC_BASE_URL=http://127.0.0.1:1234

# Can be anything, or the API key you have set

# if you set one in the step above.

export ANTHROPIC_API_KEY=api-key-here

To view the full list of supported environment variables, you can refer to the Claude Code documentation.
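If you would rather not change your shell configuration, another option is a small launcher script that sets the 2 variables for a single run. The sketch below is only an illustration: the helper names are made up, and it assumes the claude command is on your PATH. It also adds http:// when the base URL was written without a scheme, which is an easy mistake to make.

```python
import os
import subprocess


def normalize_base_url(value: str) -> str:
    """Add http:// when the scheme is missing and drop any trailing slash."""
    value = value.strip()
    if not value.startswith(("http://", "https://")):
        value = "http://" + value
    return value.rstrip("/")


def claude_env(base_url: str, api_key: str, base_env=None) -> dict:
    """Return a copy of the environment with both variables set."""
    env = dict(os.environ if base_env is None else base_env)
    env["ANTHROPIC_BASE_URL"] = normalize_base_url(base_url)
    env["ANTHROPIC_API_KEY"] = api_key
    return env


def launch_claude(base_url: str = "127.0.0.1:1234", api_key: str = "lmstudio") -> int:
    """Start the claude CLI with the variables applied to this run only."""
    return subprocess.call(["claude"], env=claude_env(base_url, api_key))
```

Calling launch_claude() starts Claude Code with the variables set for that process only, so your normal shell environment stays untouched.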

Step 3: Run Claude Code #

After setting up the relevant environment variables, you can run the claude command.

If the claude command is not found, you can run the executable by its full path instead: C:\Users\<username>\.local\bin\claude.exe

Follow the step by step guide and set up the CLI to your preference.

If you have set it up correctly, it should go into Claude Code directly. Otherwise, take a look at the alternative method in Step 3a below.

Trust the workspace if you want to start editing with Claude Code.

If you are running a local LLM, Claude Code may show a prompt saying that the API key is not recommended. You can still use the key, as requests will be routed to our local instance.

Claude Code is now set up correctly! Once you type a query into Claude Code, you should be able to see the model working in LM Studio to answer it.
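If nothing shows up in LM Studio, one way to narrow the problem down is to send a request to the server yourself, without Claude Code in between. The sketch below builds a request in the Anthropic Messages format (model, max_tokens, messages). It assumes LM Studio serves that format at /v1/messages under the base URL, and "your-model-id" is a placeholder for the identifier of the model you loaded.

```python
import json
import urllib.request


def build_messages_request(base_url, api_key, model, prompt, max_tokens=64):
    """Build (but do not send) an Anthropic-style messages request."""
    body = {
        "model": model,
        "max_tokens": max_tokens,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/v1/messages",  # path is an assumption
        data=json.dumps(body).encode("utf-8"),
        headers={
            "content-type": "application/json",
            "x-api-key": api_key,
            "anthropic-version": "2023-06-01",
        },
        method="POST",
    )


def send(request):
    """Send the request and return the decoded JSON reply."""
    with urllib.request.urlopen(request, timeout=120) as resp:
        return json.loads(resp.read().decode("utf-8"))
```

For example, send(build_messages_request("http://127.0.0.1:1234", "lmstudio", "your-model-id", "Say hello")) should return a JSON reply if the server is reachable. If this works but Claude Code does not, the problem is more likely in the environment variables than in LM Studio.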

Step 3a: Alternative method to run Claude Code #

If you are having issues, you can navigate to the .claude.json file.

- On Linux / Mac, you can navigate to ~/.claude.json

- For Windows, you can navigate to C:\Users\<your-user>\.claude.json

{

// Add this section into the `claude.json` file

"env": {

"ANTHROPIC_BASE_URL": "http://127.0.0.1:1234",

"ANTHROPIC_API_KEY": "your-api-key"

},

// Other Keys

}

If Claude Code is not reading the configuration correctly, you can add the 2 environment variables here instead.

After saving the .claude.json file, you can start claude again and it should not require you to log in again.
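If you prefer not to edit the file by hand, the same change can be scripted. The sketch below is a minimal example with made-up helper names: it adds the 2 variables under the "env" key and keeps every other key in the file as it was. It assumes the file contains plain JSON, so the // comments in the snippet above are explanatory only and must not be in the real file.

```python
import json
from pathlib import Path


def merge_env(config: dict, base_url: str, api_key: str) -> dict:
    """Return a copy of `config` with the two variables set under "env"."""
    updated = dict(config)
    env = dict(updated.get("env", {}))
    env["ANTHROPIC_BASE_URL"] = base_url
    env["ANTHROPIC_API_KEY"] = api_key
    updated["env"] = env
    return updated


def update_config_file(path: Path, base_url: str, api_key: str) -> None:
    """Rewrite the JSON file at `path`, keeping every existing key."""
    config = json.loads(path.read_text(encoding="utf-8")) if path.exists() else {}
    merged = merge_env(config, base_url, api_key)
    path.write_text(json.dumps(merged, indent=2), encoding="utf-8")
```

For example, update_config_file(Path.home() / ".claude.json", "http://127.0.0.1:1234", "your-api-key") updates the file in your home directory. It is worth making a backup copy of the file before running it.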

Conclusion #

In this tutorial, we went through how to set up a local LLM with LM Studio and link Claude Code to it. The walkthrough covers Windows, but it should also work on macOS and Linux.